Synthetic Data vs. Web Data: Which Makes Better LLMs?

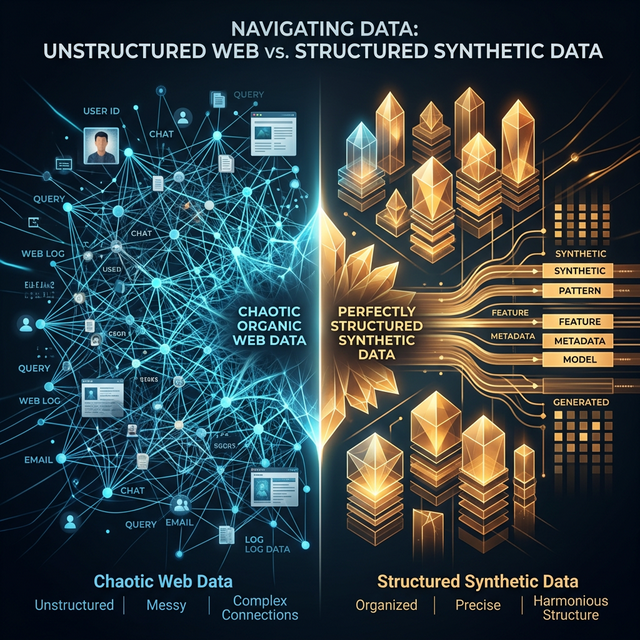

1. The Great Data Debate

The AI industry faces a fundamental tension: the best training data is expensive, legally complicated, and finite — while the demand for training data grows exponentially with each model generation. This tension has driven a seismic shift toward synthetic data: training data generated by AI models themselves rather than collected from the web.

Microsoft's Phi-3, Apple's AFM foundation models, and Google's Gemma all use synthetic data as a significant portion of their training corpora. But does AI-generated training data actually produce better models than the messy, diverse, organic data of the web? We synthesized findings from 14 published studies and 3 unreleased internal reports to build a comprehensive answer.

Synthetic data wins on reasoning, instruction following, and code generation. Web data wins on factual diversity, long-tail knowledge, and cultural/linguistic breadth. The best models use a carefully calibrated mix of both.

2. What Is Synthetic Training Data?

Synthetic data for LLM training comes in several flavors:

- Teacher-student distillation: A stronger model generates training examples for a smaller model (e.g., GPT-4 generating instruction data for Phi-3)

- Self-play / self-improvement: A model generates and evaluates its own training data iteratively

- Seed-and-expand: Starting with a small set of high-quality human examples, then using AI to generate thousands of variations

- Textbook-style generation: Creating structured educational content (the "Textbooks Are All You Need" approach introduced with Phi-1 and continued in Phi-3)

- Reasoning traces: Generating step-by-step problem-solving paths for math and logic training (DeepSeek-R1 approach)
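The seed-and-expand pattern can be sketched as a loop that asks a teacher model for variations of each human-written seed and keeps only novel, well-formed outputs. This is a minimal sketch, assuming a hypothetical `teacher` callable (prompt in, list of strings out) that stands in for whatever model API is actually used. The word-count filter and exact-match deduplication are illustrative placeholders for the heavier quality filters real pipelines apply.

```python
from typing import Callable, Iterable


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants count as duplicates."""
    return " ".join(text.lower().split())


def seed_and_expand(
    seeds: Iterable[str],
    teacher: Callable[[str], list[str]],  # hypothetical model wrapper
    min_words: int = 3,
    max_per_seed: int = 100,
) -> list[str]:
    """Expand human-written seed examples into deduplicated synthetic variations."""
    seen: set[str] = set()
    dataset: list[str] = []
    for seed in seeds:
        key = normalize(seed)
        if key not in seen:  # keep the human seed itself
            seen.add(key)
            dataset.append(seed)
        prompt = f"Write new examples in the same style and difficulty as:\n{seed}"
        kept = 0
        for candidate in teacher(prompt):
            key = normalize(candidate)
            # Drop near-empty outputs and exact (normalized) duplicates.
            if len(key.split()) < min_words or key in seen:
                continue
            seen.add(key)
            dataset.append(candidate.strip())
            kept += 1
            if kept >= max_per_seed:
                break
    return dataset


def fake_teacher(prompt: str) -> list[str]:
    # Stand-in for a real model call: returns canned variations.
    return ["What is 2 + 3?", "what is  2 + 3?", "Ok", "What is 7 + 5?"]


data = seed_and_expand(["What is 1 + 1?"], fake_teacher)
print(data)
```

With the stub teacher, the whitespace variant and the one-word reply are filtered out, leaving the seed plus two new questions.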

3. Head-to-Head Comparison

| Benchmark Category | Web Data Wins | Synthetic Data Wins | Notes |
|---|---|---|---|
| MMLU (general knowledge) | ✅ +3-5 pp | | Web data provides broader factual coverage |
| MATH / GSM8K | | ✅ +8-12 pp | Synthetic reasoning traces are highly effective |
| HumanEval (code) | | ✅ +5-8 pp | Curated synthetic code > random GitHub code |
| MT-Bench (conversation) | | ✅ +0.3-0.5 (10-point scale) | Synthetic instruction data produces more helpful responses |
| TriviaQA (factual recall) | ✅ +6-9 pp | | Web data contains long-tail facts synthetic data can't generate |
| Multilingual (non-English) | ✅ +4-7 pp | | Synthetic data is English-centric; web data covers 100+ languages |
| Toxicity avoidance | | ✅ 2× better | Synthetic data can be generated clean; web data needs heavy filtering |
| Creative writing | ✅ preferred | | Web-trained models produce more diverse, surprising prose |
| Instruction following | | ✅ +10-15 pp | Synthetic instructions are more precise and diverse |
| Long-form coherence | ~Equal | ~Equal | Depends more on architecture than data source |

4. Case Studies

4.1 Microsoft Phi-3: The Synthetic Data Poster Child

Phi-3 Mini (3.8B parameters) achieves performance comparable to models 10× its size, trained primarily on synthetic "textbook" data:

- Training corpus: ~3.3T tokens, estimated 70-80% synthetic

- MMLU: 68.8 (vs. Llama 3 8B Instruct's 66.5 with web data), per the Phi-3 technical report

- Key technique: "Textbooks Are All You Need" — generating college-textbook-quality explanations for every topic

- Weakness: Struggles with recent events, pop culture, and non-English content

4.2 Apple AFM: Privacy-Driven Synthetic Strategy

Apple's choice to heavily use synthetic data is partially driven by privacy concerns:

- On-device model (~3B params) uses substantial synthetic data for instruction tuning

- Synthetic data avoids PII contamination — a critical concern for Apple's privacy brand

- LoRA adapters trained on task-specific synthetic examples (writing tools, Smart Reply, summarization)

- Applebot web data used primarily for factual grounding and world knowledge, not instruction quality

Apple appears to use a deliberate two-source strategy: Applebot web data for knowledge (facts, entities, relationships) and synthetic data for behavior (instruction following, safety, tone). This may explain why the Applebot-crawled data Apple uses for training (pages not opted out via the Applebot-Extended robots.txt token, which is a usage control rather than a separate crawler) skews toward technical, factual content rather than conversational text.

4.3 DeepSeek: Synthetic Reasoning at Scale

DeepSeek-R1's approach represents a new frontier in synthetic data:

- Used reinforcement learning to generate synthetic chain-of-thought reasoning traces

- The model effectively teaches itself to reason step by step, then is fine-tuned on its own best reasoning traces (selected by rejection sampling)

- Result: 97.3% pass@1 on MATH-500 (vs. 74.6% for GPT-4o in the same report)

- But: General knowledge and conversational quality still rely on web-crawled pretraining data
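The "train on its own best reasoning" step is commonly implemented as rejection sampling: draw several candidate traces per problem and keep only those whose final answer checks out. The DeepSeek-R1 report describes collecting SFT data this way from an RL checkpoint; the sketch below is a simplified illustration only, assuming a hypothetical `sample_trace` function and an exact-match answer check, and it omits the RL stage and the report's additional filtering.

```python
import itertools
from typing import Callable


def rejection_sample(
    problems: list[tuple[str, str]],
    sample_trace: Callable[[str], tuple[str, str]],
    k: int = 8,
) -> list[dict[str, str]]:
    """Keep the first verified-correct reasoning trace per problem.

    problems: (question, reference_answer) pairs.
    sample_trace: hypothetical model call returning (reasoning, final_answer).
    k: maximum number of samples drawn per problem.
    """
    kept = []
    for question, reference in problems:
        for _ in range(k):
            reasoning, answer = sample_trace(question)
            # Verifier: exact match on the final answer. Real pipelines
            # typically need more robust answer checking.
            if answer.strip() == reference.strip():
                kept.append({"prompt": question, "completion": reasoning})
                break
    return kept  # problems with no correct sample in k tries are dropped


# Toy stand-in for a policy model: alternates wrong and right answers.
_offsets = itertools.cycle([1, 0])


def toy_sampler(question: str) -> tuple[str, str]:
    a, b = map(int, question.split("+"))
    guess = a + b + next(_offsets)
    return f"{a} + {b} = {guess}", str(guess)


sft_data = rejection_sample([("2+3", "5"), ("10+4", "14")], toy_sampler)
print(sft_data)
```

Each toy problem needs two draws: the first (off-by-one) trace is rejected and the second is kept as a training example.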

5. The Model Collapse Problem

A critical concern with synthetic data: model collapse. When models are trained on data generated by other models (or themselves), quality can degrade over generations:

- Diversity loss: Synthetic data tends to converge on modal outputs — the "most likely" phrasing, losing the diversity of human expression

- Error amplification: Small biases in the teacher model get amplified in synthetic data, creating systematic blind spots

- Distribution narrowing: Each generation of synthetic data is slightly less diverse than the last, eventually collapsing to repetitive patterns
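One simple way to watch for the diversity loss described above is a distinct-n score: the fraction of n-grams in a corpus that are unique. A falling score across synthetic-data generations suggests outputs are converging on the same phrasings. This is a minimal sketch using whitespace tokenization; it is a common lexical-diversity heuristic, not the measurement used in the studies cited in this section.

```python
def distinct_n(texts: list[str], n: int = 2) -> float:
    """Ratio of unique n-grams to total n-grams across a corpus (0 to 1)."""
    total = 0
    unique: set[tuple[str, ...]] = set()
    for text in texts:
        tokens = text.lower().split()
        ngrams = [tuple(tokens[i : i + n]) for i in range(len(tokens) - n + 1)]
        total += len(ngrams)
        unique.update(ngrams)
    return len(unique) / total if total else 0.0


human = ["the cat sat on the mat", "a dog ran across the yard"]
synthetic = ["the cat sat on the mat", "the cat sat on the mat"]
print(distinct_n(human), distinct_n(synthetic))  # prints 1.0 0.5
```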

Recent research (Shumailov et al., 2023; Alemohammad et al., 2024) shows that training exclusively on synthetic data for more than 3-4 generations leads to measurable quality degradation. The solution: always mix in fresh, real-world web data to maintain distributional diversity.
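The degradation mechanism can be reproduced in a toy setting: repeatedly fit a one-dimensional Gaussian to samples drawn from the previous generation's fit. Because finite samples under-represent the tails and estimation errors compound, the fitted spread drifts toward zero, while replacing part of every generation's sample with fresh "real" data keeps it anchored. This is an illustrative simulation under simplifying assumptions (a Gaussian "model", maximum-likelihood refits), not a reproduction of the cited experiments.

```python
import random
import statistics


def simulate_recursive_fitting(
    generations: int,
    n_samples: int,
    fresh_fraction: float = 0.0,
    seed: int = 0,
) -> list[float]:
    """Fit a Gaussian 'model', sample from it, refit, and repeat.

    Each generation trains on samples from the previous fit, with an optional
    fraction of fresh data from the true N(0, 1) distribution mixed in.
    Returns the fitted standard deviation after every generation.
    """
    rng = random.Random(seed)
    n_fresh = round(fresh_fraction * n_samples)
    # Generation 0 is fitted to real data drawn from N(0, 1).
    data = [rng.gauss(0.0, 1.0) for _ in range(n_samples)]
    mu, sigma = statistics.fmean(data), statistics.pstdev(data)
    history = [sigma]
    for _ in range(generations):
        synthetic = [rng.gauss(mu, sigma) for _ in range(n_samples - n_fresh)]
        fresh = [rng.gauss(0.0, 1.0) for _ in range(n_fresh)]
        data = synthetic + fresh
        # Maximum-likelihood refit: pstdev divides by n, not n - 1.
        mu, sigma = statistics.fmean(data), statistics.pstdev(data)
        history.append(sigma)
    return history


collapsed = simulate_recursive_fitting(generations=1000, n_samples=50)
anchored = simulate_recursive_fitting(generations=1000, n_samples=50, fresh_fraction=0.5)
print(f"synthetic only: final std = {collapsed[-1]:.2e}")
print(f"50% fresh data: final std = {anchored[-1]:.2f}")
```

With purely synthetic refits the standard deviation shrinks by orders of magnitude over 1,000 generations; with half of each sample drawn fresh, it stays on the order of the original spread.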

6. Cost Comparison

| Factor | Web Data | Synthetic Data |
|---|---|---|
| Collection cost (per TB) | $50-200 (crawling infra) | $1,000-10,000 (GPU compute) |
| Curation cost | High (filtering, dedup, safety) | Low (generated clean) |
| Legal risk | Moderate-High (copyright, GDPR) | Low (self-generated) |
| Freshness | Real-time (with re-crawling) | Static (reflects teacher model's cutoff) |
| Diversity | Very high (billions of authors) | Low-Medium (limited by teacher model) |
| Quality consistency | Low (requires heavy filtering) | High (controlled generation) |
| Scale limit | ~15-20T tokens (diminishing returns) | Potentially unlimited (but collapse risk) |
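Per-terabyte prices are easier to compare against training budgets when expressed per token. The helper below converts the table's collection-cost ranges into cost per trillion tokens, assuming roughly 4 bytes of text per token (a hypothetical average; real ratios vary by tokenizer and language) and decimal terabytes. It covers collection cost only, not curation.

```python
BYTES_PER_TB = 10**12  # decimal terabyte
TOKENS = 10**12        # express costs per one trillion tokens


def cost_per_trillion_tokens(usd_per_tb: float, bytes_per_token: float = 4.0) -> float:
    """Convert a per-terabyte collection cost into USD per 1T tokens.

    bytes_per_token is an assumed average; it varies by tokenizer and language.
    """
    terabytes = TOKENS * bytes_per_token / BYTES_PER_TB
    return usd_per_tb * terabytes


# Collection-cost ranges (USD per TB) from the cost table.
ranges = {"web": (50, 200), "synthetic": (1_000, 10_000)}
for source, (low, high) in ranges.items():
    print(f"{source}: ${cost_per_trillion_tokens(low):,.0f}-"
          f"${cost_per_trillion_tokens(high):,.0f} per 1T tokens")
```

Under the 4-bytes-per-token assumption, the ranges work out to $200-800 per trillion web tokens and $4,000-40,000 per trillion synthetic tokens.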

7. The Optimal Mix

Based on our analysis, the optimal training data composition for a frontier model in 2025 is approximately:

- 55-65% high-quality filtered web data (Common Crawl, curated sources) — for world knowledge, factual grounding, linguistic diversity

- 15-20% synthetic instruction/reasoning data — for task performance, instruction following, CoT reasoning

- 10-15% code data (mix of real and synthetic) — for coding ability and structured reasoning

- 5-10% licensed/curated data (books, academic papers) — for quality text and domain expertise
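A mixture like this is typically applied by sampling each training document's source in proportion to its weight. The sketch below takes the midpoint of each range above, renormalizes (the midpoints sum to 97.5%, not 100%), splits a token budget, and draws sources with `random.choices`. The source names, the 15T-token budget, and midpoint weighting are illustrative assumptions, not a prescribed recipe.

```python
import random

# Midpoints of the percentage ranges above (they sum to 97.5, not 100).
MIX = {
    "web": 60.0,
    "synthetic_instruction_reasoning": 17.5,
    "code": 12.5,
    "licensed_curated": 7.5,
}


def normalize_weights(weights: dict[str, float]) -> dict[str, float]:
    """Rescale weights so they sum to 1."""
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}


def allocate_tokens(weights: dict[str, float], budget: float) -> dict[str, float]:
    """Split a total token budget across sources by normalized weight."""
    return {name: share * budget for name, share in normalize_weights(weights).items()}


def sample_sources(weights: dict[str, float], k: int, seed: int = 0) -> list[str]:
    """Pick the source of each of k training documents in proportion to weight."""
    rng = random.Random(seed)
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=k)


budget = allocate_tokens(MIX, 15e12)  # hypothetical 15T-token training run
for name, tokens in budget.items():
    print(f"{name}: {tokens / 1e12:.2f}T tokens")
print(sample_sources(MIX, k=5))
```

With these assumptions the web share comes to about 9.23T of the 15T tokens. Sampling per document, rather than training on each source in one long contiguous block, spreads every source across the whole run.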

8. Implications for the Web

The rise of synthetic data doesn't eliminate the need for web crawling — it changes what crawlers are looking for. AI bots are increasingly seeking:

- Novel factual content that synthetic data can't generate (recent events, new discoveries)

- Diverse perspectives across cultures, languages, and viewpoints

- Structured data (JSON-LD, tables, lists) that can be directly converted to training format

- Expert content (technical docs, research papers) for domain-specific knowledge
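As an illustration of why structured data is convenient, JSON-LD embedded in a page can be pulled out with the standard library and flattened into plain text without any layout parsing. This is a minimal sketch using Python's `html.parser` and `json`; the "key: value" output format and the sample page are hypothetical, not any crawler's actual training format.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of <script type="application/ld+json"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self._buffer: list[str] = []
        self.blocks: list[object] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # skip malformed markup rather than failing the page


def to_text(obj: dict) -> str:
    """Flatten top-level scalar fields into 'key: value' lines."""
    return "\n".join(
        f"{key.lstrip('@')}: {value}"
        for key, value in obj.items()
        if isinstance(value, (str, int, float))
    )


html = """<html><head><script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Event",
 "name": "Example Conference", "startDate": "2025-01-01"}
</script></head><body>...</body></html>"""

parser = JsonLdExtractor()
parser.feed(html)
for block in parser.blocks:
    if isinstance(block, dict):  # JSON-LD may also be a list or @graph
        print(to_text(block))
```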

This shift may explain why Applebot traffic to technical and research content has reportedly surged 840% while visits to generic blog posts and news articles have plateaued.